Relevant Overviews

- Content Strategy

- Online Community Management

- Social Media Strategy

- Content Creation & Marketing

- Digital Transformation

- Blockchain, Crypto, NFTs etc

- Personal Productivity

- Innovation Strategy

- Communications Tactics

- Psychology

- Social Web

- Media

- Politics

- Communications Strategy

- Science&Technology

- Business

- Large language models

Overview: Large language models

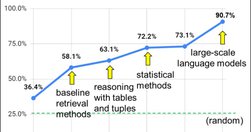

While this overview will eventually reflect everything tagged #llm on this Hub, my current focus is on how LLMs could best support MyHub users in particular and inhabitants of decentralised collective intelligence ecosystems in general (cf. How Artificial Intelligence will finance Collective Intelligence). This is version 1.x, 2023-04-24.

How the hell does this work?

The best explanation of how it works by far comes from Jon Stokes' ChatGPT Explained: A Normie's Guide To How It Works. In essence, "each possible blob of text the model could generate ... is a single point in a probability distribution". So when you Submit a question, you're collapsing the wave function and landing on a point in that probability distribution: a collection of symbols probably related to what you inputted. That collection's content depends "on the shape of the probability distributions ... and on the dice that the ... computer’s random number generator is rolling."

So if you ask whether Schrödinger's cat is alive or dead, you'll get different answers depending on how you ask the question. That is not because the LLM understands anything about Schrödinger, his cat or quantum mechanics, but because amongst all "possible collections of symbols the model could produce... there are regions in the model’s probability distributions that contain collections of symbols we humans interpret to mean that the cat is alive. And ... adjacent regions ... containing collections of symbols we interpret to mean the cat is dead. ChatGPT’s latent space has been deliberately sculpted into a particular shape by...":

- Training "a foundation model on high-quality data" so it's "like an atom where the orbitals are shaped in a way we find to be useful."

- Fine-tuning "it with more focused, carefully curated training data" to reshape problematic results

- Reinforcement learning with human feedback (RLHF) to further refine the "model’s probability space so that it covers as tightly as possible only the points ... that correspond to “true facts” (whatever those are!)"

The key takeaway here is that ChatGPT is not truly talking to you, it's "just shouting language-like symbol collections into the void". And here's a good example of its spectacularly wrong hallucinations.
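To make the "dice" image concrete, here is a minimal Python sketch of sampling from a probability distribution over possible answers. Everything in it is an assumption made for illustration: the three candidate texts, their weights and the `reshape` helper are invented, a real LLM samples one token at a time from a vastly larger distribution, and fine-tuning or RLHF change the model's weights rather than editing probabilities directly as this toy does.

```python
import random

# Hypothetical toy distribution over whole answers to one prompt.
# Texts and weights are invented for illustration only.
CONTINUATIONS = {
    "the cat is alive": 0.45,
    "the cat is dead": 0.45,
    "the cat is both alive and dead": 0.10,
}


def sample_continuation(distribution, rng):
    """Roll the dice: pick one answer, weighted by its probability."""
    texts = list(distribution)
    weights = [distribution[text] for text in texts]
    return rng.choices(texts, weights=weights, k=1)[0]


def reshape(distribution, penalised, factor):
    """Crude stand-in for fine-tuning/RLHF: shrink unwanted regions, then renormalise."""
    scaled = {
        text: p * (factor if text in penalised else 1.0)
        for text, p in distribution.items()
    }
    total = sum(scaled.values())
    return {text: p / total for text, p in scaled.items()}


rng = random.Random(42)  # fixed seed so a run can be repeated
answers = [sample_continuation(CONTINUATIONS, rng) for _ in range(5)]
print(answers)  # same prompt each time; the answer depends on the dice
```

Calling `reshape(CONTINUATIONS, {"the cat is both alive and dead"}, 0.1)` makes that answer much less likely without ever checking whether it is true, which is the point of the section above: the shape of the distribution changes, not the model's understanding.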

What the hell does it mean?

As such it is not "almost AI"; it's not even close. According to Noam Chomsky, "The human mind is not... a lumbering statistical engine for pattern matching... True intelligence is demonstrated in the ability to think and express improbable but insightful things.... [and is] also capable of moral thinking", whereas ChatGPT's "moral indifference born of unintelligence... exhibits something like the banality of evil: plagiarism and apathy and obviation", summarising arguments but refusing to take a position because its creators learnt the lesson of Microsoft's Tay chatbot.

Not understanding this is dangerous, as the interview/profile of Emily M. Bender in You Are Not a Parrot And a chatbot is not a human makes clear: "LLMs are great at mimicry and bad at facts... the Platonic ideal of the bullshitter... don’t care whether something is true or false... only about rhetorical power. [So] do not conflate word form and meaning. Mind your own credulity... [we've made] machines that can mindlessly generate text... we haven’t learned how to stop imagining the mind behind it."

Believing an LLM understands what it says is particularly a problem given that it was trained on words written overwhelmingly by white people, with men and wealth overrepresented (see also OpenAI’s ChatGPT Bot Recreates Racial Profiling). Bender is very good on what the safe use of artificial intelligence looks like (TL;DR: ChatGPT ain't it), covering the dehumanising effect of treating LLMs like humans, and humans like LLMs, and asking what happens when we habituate "people to treat things that seem like people as if they’re not"? Won't we all start treating real humans worse?

Answer: we might "lose a firm boundary around the idea that humans... are equally worthy", bordering on fascism: "The AI dream is governed by the perfectibility thesis... a fascist form of the human."

For more on why we must avoid a tiny number of companies dominating the field:

- "We are now facing the prospect of a significant advance in AI using methods that are not described in the scientific literature and with datasets restricted to a company that appears to be open only in name… And if history is anything to go by, we know a lack of transparency is a trigger for bad behaviour in tech spaces” - Everyone’s having a field day with ChatGPT — but nobody knows how it actually works

- The claims re: ChatGPT by "fascinated evangelists" are simply "a distraction from the actual harm perpetuated by these systems. People get hurt from the very practical ways such models fall short in deployment", while its achievements are presented as independent of its engineers' choices, disconnecting them from human accountability - ChatGPT, Galactica, and the Progress Trap.

How can I use it?

Understanding how it does what it does is key to understanding the answer to this question, so Stokes' Normie's Guide, above, is also good here: "Isn’t the model’s ability to make things up often a feature, not a bug?".

More practically:

- in The Mechanical Professor Ethan Mollick, uni professor, puts ChatGPT through its paces. From my notes: impressive as a teacher, not great as a researcher/academic writer ("Nothing particularly wrong, but also nothing good"), reasonable summariser and general writer ("results were not brilliant, and I wouldn’t vouch for their accuracy"). So while not yet a threat to real academics, ChatGPT is a jobkiller for copywriters, almost everyone on New Grub Street and anyone else spending their days churning out rehashed content.

- learn something: give it a summary of something (or ask it to summarise, but then double-check for hallucinations), and then ask it to ask you questions about it and rate your answers. But double-check for hallucinations...

Prompt engineering

My reading queue is overflowing with identikit posts on prompt engineering, which I'll get to eventually.

AutoGPT: adding value to LLMs to create focused AI assistants

"AutoGPTs... automate multi-step projects that would otherwise require back-and-forth interactions with GPT-4... enable the chaining of thoughts to accomplish a specified objective and do so autonomously" - something I've already played with in the shape of AgentGPT, and which encapsulates how I'll integrate this into MyHub.ai (next), because this approach "transforms chat from a basic communication tool into ... AI into assistants working for you".
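The "chaining" idea can be sketched as a loop that keeps asking a model for the next step and feeds the accumulated results back in. This is only a rough illustration under stated assumptions, not how AutoGPT or AgentGPT are actually implemented: `run_agent`, the prompt wording and the `DONE` convention are hypothetical, and `fake_llm` is a scripted stand-in so the example runs without any API.

```python
def run_agent(objective, llm, max_steps=5):
    """Chain model calls: ask for the next step, record it, repeat until DONE."""
    history = []
    for _ in range(max_steps):
        prompt = (
            f"Objective: {objective}\n"
            f"Done so far: {history}\n"
            "Next step (or DONE):"
        )
        step = llm(prompt).strip()
        if step == "DONE":
            break
        history.append(step)
    return history


def fake_llm(prompt):
    """Scripted stand-in for a real model (hypothetical, for offline use only)."""
    script = ["list my notes tagged llm", "summarise each note", "draft an overview"]
    already_done = sum(1 for step in script if step in prompt)
    return script[already_done] if already_done < len(script) else "DONE"


steps = run_agent("write an overview of my LLM notes", fake_llm)
print(steps)
```

The `max_steps` cap is the one design choice worth noting: an autonomous loop needs some limit so that it stops even if the model never says it is finished.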

How should it be integrated into MyHub?

How could these LLMs be integrated into tools for thought in general, and (tomorrow's) MyHub.ai in particular? I've always wanted to access AI services from inside the MyHub thinking tool (see Thinking and writing in a decentralised collective intelligence ecosystem), from where they can apply their abilities to one's own notes. But what will that look like?

My first thought: "imagine your own personal AI assistant operating across your content - your public posts, private library (including content shared from friends, stuff in your reading queue, etc.) and the wider web, emphasising the sources you favour (based firstly on your Priority Sources, and then on the number of times you've curated their content)."
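One way to read "emphasising the sources you favour" is as a two-level sort: Priority Sources first, then by how often their content has been curated. The sketch below assumes exactly that reading. The `Source` class, its field names and the sample data are hypothetical and do not describe how MyHub.ai stores anything.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    is_priority: bool   # hypothetical flag: one of the user's Priority Sources
    times_curated: int  # hypothetical count of items curated from this source


def rank_sources(sources):
    """Priority Sources first, then most-curated first within each group."""
    return sorted(sources, key=lambda s: (not s.is_priority, -s.times_curated))


# Invented sample data.
sources = [
    Source("blog-a.example", False, 40),
    Source("journal-b.example", True, 3),
    Source("newsletter-c.example", False, 12),
    Source("magazine-d.example", True, 25),
]
ranked = [s.name for s in rank_sources(sources)]
print(ranked)
```

A real assistant would more likely blend these signals into a weight than apply a strict ordering, but the strict version shows the "firstly ... and then" priority in the quote most directly.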

In I Built an AI Chatbot Based On My Favorite Podcast, the author shows how to do just that: "It took probably a weekend of effort" to build a chatbot to search his library "of all transcripts from the Huberman Lab podcasts, finds relevant sections and send them to GPT-3 with a carefully designed prompt".

A step-by-step guide to building a chatbot based on your own documents with GPT goes into far more detail.
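Both pieces describe the same basic pattern: find the sections of your library most relevant to a question, then send them to the model inside a carefully worded prompt. Here is a minimal sketch of that pattern using only the Python standard library. It is an illustration under assumptions, not the method from either article: simple word-overlap (cosine similarity over word counts) stands in for whatever retrieval those authors use, the prompt wording and sample notes are invented, and no model is actually called.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_sections(question, sections, k=2):
    """Return the k sections whose wording overlaps most with the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        sections, key=lambda s: cosine(q, Counter(tokenize(s))), reverse=True
    )
    return ranked[:k]


def build_prompt(question, sections, k=2):
    """Wrap the retrieved sections and the question in an instruction."""
    context = "\n---\n".join(top_sections(question, sections, k))
    return (
        "Answer the question using only the excerpts below. "
        "If they do not contain the answer, say so.\n\n"
        f"Excerpts:\n{context}\n\nQuestion: {question}\nAnswer:"
    )


# Invented sample notes standing in for a personal library.
notes = [
    "Morning sunlight exposure helps set the circadian rhythm.",
    "Cold showers are discussed as a way to raise alertness.",
    "Tags on this Hub have been applied by hand since 2002.",
]
prompt = build_prompt("How does sunlight affect the circadian rhythm?", notes, k=1)
print(prompt)
```

The resulting `prompt` string is what would be sent to the model. Restricting the model to the supplied excerpts is a common way to try to reduce hallucination, though it does not remove the need to double-check the answer.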

Pretty soon I'll be able to do something similar, playing with ChatGPT combined with my entire Hub of over 3600 pieces of content. But while the above chatbot answers questions, I'm already pretty sure I don't want to treat ChatGPT like a search engine.

Instead, going into this experiment, ChatGPT as muse, not oracle is my overall starting point, asking "What if we were to think of LLMs not as tools for answering questions, but as tools for asking us questions and inspiring our creativity? ... ChatGPT asked me probing questions, suggested specific challenges, drew connections to related work, and inspired me to think about new corners of the problem."

Other ideas include:

- as a tutor, as in The Mechanical Professor, who "put the text of my book into ChatGPT and asked for a summary ... asked it for improvements... to write a new chapter that answered this criticism... results were not brilliant, and I wouldn’t vouch for their accuracy, but it could serve a basis for writing."

- finding me content on the web based on my interests, as represented by my notes

- auto-categorisation: I've been manually tagging stuff I like since 2002. I have not been consistent in the use of tags. Can ChatGPT help me organise my stuff?
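On the last idea, part of the tag inconsistency may be detectable mechanically before any LLM gets involved. The sketch below is a plain string-normalisation pass, not an LLM technique: it groups tags that differ only in case, punctuation or spacing. The function names and sample tags are hypothetical, and judging whether differently worded tags mean the same thing would still need a model or a human.

```python
import re
from collections import defaultdict


def normalise(tag):
    """Lowercase the tag and strip everything except letters and digits."""
    return re.sub(r"[^a-z0-9]", "", tag.lower())


def group_variants(tags):
    """Map each normalised form to its spellings, keeping only real duplicates."""
    groups = defaultdict(set)
    for tag in tags:
        groups[normalise(tag)].add(tag)
    return {
        key: sorted(variants)
        for key, variants in groups.items()
        if len(variants) > 1
    }


# Invented sample tags.
tags = ["#llm", "LLM", "social media", "Social-Media", "socialmedia", "politics"]
variants = group_variants(tags)
print(variants)
```

Each group in `variants` is a candidate for merging into one tag; tags with a single spelling, like `politics` here, are left out.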

Or should it be integrated at all?

But I'm mindful that I might not find it that useful. Many years ago, for example, I thought I wanted autosummary: click a button and get an autosummary of an article as I put it into my Hub. But that risks robbing me of any chance of learning anything from it.

Moreover, as Ted Chiang points out in ChatGPT Is a Blurry JPEG of the Web, AI should not be used as a writing tool if you're trying to write something original: "Sometimes it’s only in the process of writing that you discover your original ideas... Your first draft isn’t an unoriginal idea expressed clearly; it’s an original idea expressed poorly", accompanied by your dissatisfaction with it, which drives you to improve it. As he asks, "just how much use is a blurry jpeg when you still have the original?"

Which links to a piece not tagged "llm", where Jeffrey Webber points to a central problem with Tools for Thought: "the word ‘Tool’ first causes us to focus on the tool more than the thinking... to confuse thought as an object rather than thought as a process... obsessed with managing notes, the external indicator of thought, rather than the internal process of thinking... we do less and less of the thinking and more and more of the managing."

Can AI help? Maybe, but would we be as willing to have another human do our thinking?

Relevant resources

Jon Stokes thinks "people are talking about this chatbot in unhelpful ways... anthropomorphizing ... [and] not working with a practical, productive understanding of what the bot’s main parts are and how they fit together." So he wrote this explainer. "At the heart of ChatGPT is a large language model (LLM) that belongs to the family of generative ma…

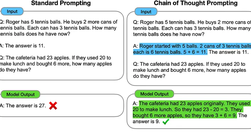

"Most of us use ChatGPT wrong. We don’t include examples in our prompts. We ignore that we can control ChatGPT’s behavior with roles. We let ChatGPT guess stuff instead of providing it with some information... We need ... high-quality prompts ... [so here's] 4 techniques used in prompt engineering." There's even a video. "Few shot standard prompts .…

"a step-by-step guide for building a document Q&A chatbot in an efficient way with llama-index and GPT API... ask the bot in natural language about your own documents/data... [see it] retrieving info from the documents and generating a response [1]... customer support, synthesizing user research, your personal knowledge management". Key points: "fin…

"ChatGPT API will allow developers to integrate ChatGPT into their own applications, products, or services". Not to be confused with ChatGPT Plus: "API has its own pricing... https://openai.com/pricing. ChatGPT Plus subscription covers usage on chat.openai.com only and costs $20/month." ChatGPT API prices: "Free trial users: 20 RPM 40000 TPM. Pay-as-you…

"You Are Not a Parrot and a chatbot is not a human" - an interview/profile of Emily M. Bender, the "computational linguist at the University of Washington ... [who] co-wrote the octopus paper... to illustrate what ... LLMs ... can and cannot do". The paper: "Climbing Towards NLU: On Meaning, Form, and Understanding in the Age of Data"... NLU = natur…

Noam Chomsky on ChatGPT, Bard and Sydney, which "take huge amounts of data, search for patterns in it and become increasingly proficient at generating statistically probable outputs — such as seemingly humanlike language and thought... hailed as the first glimmers on the horizon of artificial general intelligence ... surpassing human ... intellect…

"Microsoft imagines helping clients launch new chatbots or refine their existing ones", using a version of ChatGPT trained on post-2021 content. "The service should also provide citations to specific resources... give customers ways to upload their own data and refine the voice of their chatbots... replace Microsoft and OpenAI branding"

ChatGPT-powered, the "‘My AI’ bot will ... initially only available for $3.99 a month Snapchat Plus subscribers... eventually all... we’re going to talk to AI every day" - useful, if you're a messaging service. It's a "fast mobile-friendly version of ChatGPT inside Snapchat... trained to adhere to the company’s trust and safety guidelines... stripp…

While "Wolfram Alpha... seeks to distill scientific facts and perform calculations", ChatGPT and other similar AIs "build statistical models and string together sentences or pictures by calculating probabilities (of what the next word should be, for example). That has led to all kinds of mistakes ... Wolfram is not optimistic that the developers w…

"What if we were to think of LLMs not as tools for answering questions, but as tools for asking us questions and inspiring our creativity? ... even simple tools can lead to interesting results when they clash with the contents of our minds". So he tries using ChatGPT as a muse. TL;DR: "ChatGPT asked me probing questions, suggested specific challenge…

When James West "joined a small wave of users granted early access" to the new Bing (I'll call and tag it "BingGPT"), powered by the same LLM behind ChatGPT, which is "a great party trick ... powerful work tool, capable of jumpstarting creativity, automating mundane tasks", he soon "noticed strange inconsistencies... dangerously convincing falseho…

Jeff Jarvis is not a fan of Ted Chiang's piece on ChatGPT, although he does admit TC (one of my favourite authors) "makes a clever comparison between lossy compression... and large-language models, which learn from and spit back but do not record the entire web". Indeed, he takes the metaphor further: "what is journalism itself but lossy compressi…

Interesting, illuminating (but contested) metaphor for thinking about LLMs from one of my favourite authors, Ted Chiang: "Think of ChatGPT as a blurry jpeg of all the text on the Web. It retains much of the information... but, if you’re looking for an exact sequence of bits, you won’t find it; all you will ever get is an approximation... nonsensica…

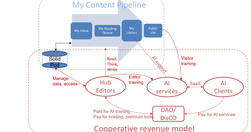

The 3rd part of my 1/1/2023 bundle of 5 posts looks at how AI could turbocharge collective intelligence "and finance the resulting ecosystem, providing an alternative to Big Tech AI monopolies".

This piece captures and then explores exactly what I've always thought about the future direction of myhub.ai: imagine what would happen if you had your own personal AI assistant operating across your content - your public posts, private library (including content shared from friends, stuff in your reading queue, etc.) and the wider web, emphasisi…

"Ipop gloop glog bluba droma floom gloope splog slopa" - This sentence means "The happy slime sees the earth under the sky while eating the slime's food" in Glorp. Here's the breakdown of the sentence: ChatGPT and I invent a fictional language spoken by slime-people... It understands subordinate clauses ... so understands at least one level of recu…

Is ChatGPT creator OpenAI "a potential Google slayer. Why look up something on a search engine when ChatGPT can write a whole paragraph explaining the answer?" It's very capable, but "struggles to distinguish between truth and falsehood... often a persuasive liar... a bit like autocomplete on your phone... trained on pretty much all of the web... …

"WordPress developer Johnathon Williams ... demonstrated that, with a little bit of expert guidance, ChatGPT can drastically reduce the amount of time it takes to extend WordPress... the plugin [written by GPT] ... worked on the first try... the best results from asking ChatGPT to generate entire functions versus specific filters or actions... [so…

Another piece pointing out that ChatGPT, while "easily the most impressive text-generating demo to date... trained through a mix of crunching billions of text documents and human coaching", should not be trusted: "a generative AI is what it eats", and ChatGPT ate countless biases in the content it processed. "No one should mistake the imitation of …

The claims re: ChatGPT by "fascinated evangelists... [that they] contain “humanity’s scientific knowledge,” are approaching artificial general intelligence ... even consciousness", are simply "a distraction from the actual harm perpetuated by these systems. People get hurt from the very practical ways such models fall short in deployment". This exc…

"ChatGPT is not overly reliable... surprising how often ... right, but they can also be plausible and wrong a lot of the time, and downright obviously wrong some of the time... responding with errors of fact, ... responses that may only be true for certain (biased) contexts... wary of providing evidence or citations ... [but] happy to make up cita…

"I spent a few minutes in conversation with ChatGPT." This was both me kicking ChatGPT's conversational tyres and exploring its limits, both those it admits to and those it does not. Key takeaways from a first reading: Many, but not all, content creators are screwed. For "writers, journalists, copywriters, consultants, and even public servants.... Chat…

ChatGPT... is going to change our world much sooner than we expect, and much more drastically
