Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality

My notes

In the Boston Consulting Group AI experiment: "we examine the performance implications of AI on realistic, complex, and knowledge-intensive tasks... subjects were randomly assigned to no AI access; GPT-4 AI access; and GPT-4 AI access with a prompt engineering overview" (or GPT + Overview).

We need to map the "jagged technological frontier", "where some tasks are easily done by AI, while others, though seemingly similar in difficulty", are not suitable for AI support. "Professionals who skillfully navigate this frontier gain large productivity benefits ... while AI can actually decrease performance when used for work outside of the frontier".

Inside the frontier

"The inside-the-frontier experiment focused on creative product innovation and development." Benefits within the frontier:

- "consultants using AI ... completed 12.2% more tasks on average, and completed tasks 25.1% more quickly... 40% higher quality"

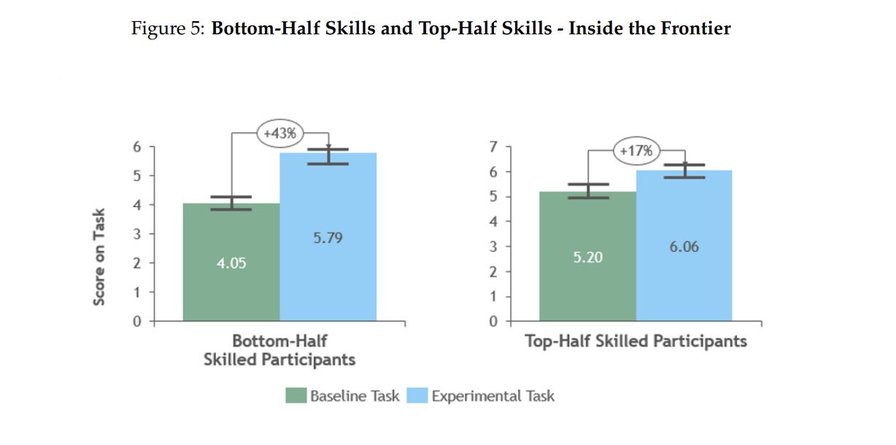

- lower-skilled consultants benefited by 43%, higher-skilled by 17%, "compared to their own scores..."

- "GPT + Overview treatment consistently exhibits a more pronounced positive effect compared to GPT Only"

Creativity vs regression to the mean

However, while participants using AI "produce ideas of higher quality... there is a marked reduction in the variability ... [and so] might lead to more homogenized outputs."

Outside the frontier

Risks outside the frontier: "consultants using AI were 19 percentage points less likely to produce correct solutions compared to those without AI... with the GPT + Overview group experiencing a more pronounced decrease".

However, with respect to recommendation quality, "subjects using AI ... consistently outperformed those not using AI".

"this study highlights the importance of validating and interrogating AI ... and of continuing to exert cognitive effort and experts’ judgment... Professionals who had a negative performance ... tended to blindly adopt its output and interrogate it less (“unengaged interaction with AI”)".

Two successful usage patterns

(More in Appendix E).

Centaurs

("allowed them to leverage AI ... optimizing their decision-making process while maintaining a significant level of human input and oversight" - Harpa)

"divide and delegate their solution-creation activities to the AI or to themselves... highly attuned to the jaggedness of the frontier ... dividing the tasks into sub-tasks ... best suited for [either] human intervention [or] efficiently managed by AI."

Even those sub-tasks they allocate to themselves are nevertheless supported by AI, but they remain in the loop.

Example: "used human knowledge to complete the task of generating a recommendation ... switched to AI to draft a memo".
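The Centaur split can be sketched as a simple allocation of sub-tasks. This is only an illustration of the idea, not anything taken from the paper: the `SubTask` and `Owner` names, the `inside_frontier` flag and the `allocate` helper are all hypothetical, and deciding whether a sub-task sits inside the frontier remains the worker's own judgment.

```python
from dataclasses import dataclass
from enum import Enum


class Owner(Enum):
    HUMAN = "human"
    AI = "ai"


@dataclass
class SubTask:
    name: str
    # Hypothetical flag: the worker's own call on whether AI handles this well.
    inside_frontier: bool


def allocate(subtasks):
    """Centaur-style split: delegate inside-the-frontier sub-tasks to AI, keep the rest."""
    return {
        task.name: (Owner.AI if task.inside_frontier else Owner.HUMAN)
        for task in subtasks
    }


# Mirrors the quoted example: the human generates the recommendation,
# then switches to AI to draft the memo.
plan = allocate([
    SubTask("generate recommendation", inside_frontier=False),
    SubTask("draft memo", inside_frontier=True),
])

for name, owner in plan.items():
    print(f"{name}: {owner.value}")
```

The binary flag is a deliberate simplification: as noted above, even the sub-tasks Centaurs keep for themselves may still be supported by AI.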

Cyborgs

("deploying it as an intrinsic part of their decision-making process... harnessed AI... as a collaborative partner, continually interacting with the technology to optimize their performance and outcomes" - Harpa).

Completely integrate with the AI, "intertwine their efforts with AI at the very frontier of capabilities... at the subtask level". Common practices: "

- Assigning a persona

- Asking AI to make editorial changes to the outputs AI has produced

- Teaching through examples: Giving example of correct answer before asking AI a question

- Modularizing tasks

- Validating: Asking AI to check its inputs, analysis, and outputs

- Demanding logic explanation

- Exposing Contradictions

- Elaborating

- Directing a Deep dive

- Adding user’s own data... to re-do the analysis in iterative cycles

- Pushing back: Disagreeing ... ask AI to reconsider"
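A few of the practices listed above (assigning a persona, teaching through examples, validating, demanding a logic explanation, pushing back) can be sketched as a chat transcript. This is a rough illustration under assumptions: the `system`/`user`/`assistant` role names follow a common chat convention rather than anything in the paper, the helper names and prompt wordings are made up, and no real API is called.

```python
def build_cyborg_messages(persona, example_question, example_answer, question):
    """Assemble an opening transcript using a persona and one worked example."""
    return [
        # Assigning a persona
        {"role": "system", "content": f"You are {persona}."},
        # Teaching through examples: show a correct answer before asking
        {"role": "user", "content": example_question},
        {"role": "assistant", "content": example_answer},
        # The actual question
        {"role": "user", "content": question},
    ]


# Hypothetical follow-up turns, keyed by the practice they illustrate.
FOLLOW_UPS = {
    "validating": "Check your inputs, analysis, and outputs for errors.",
    "demanding logic explanation": "Explain the logic behind your answer step by step.",
    "pushing back": "I disagree with that conclusion. Please reconsider it.",
}


def add_follow_up(messages, practice):
    """Return a new transcript with one follow-up turn appended."""
    return messages + [{"role": "user", "content": FOLLOW_UPS[practice]}]


messages = build_cyborg_messages(
    persona="an experienced strategy consultant",
    example_question="Summarise this interview note in one sentence: ...",
    example_answer="The interviewee sees pricing as the main growth constraint.",
    question="Which brand should the client invest in, and why?",
)
messages = add_follow_up(messages, "pushing back")

for message in messages:
    print(f"[{message['role']}] {message['content']}")
```

Keeping each practice as a separate turn reflects the iterative, back-and-forth style the Cyborg pattern describes, rather than packing everything into a single prompt.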

Conclusions

"move beyond the dichotomous decision of adopting or not AI ... focus on the knowledge workflow and the tasks within it, and in each of them, evaluate the value of using different configurations and combinations of humans and AI".

To tackle "diminished diversity of ideas ... consider employing a variety of AI models, possibly multiple LLMs, or increased human-only involvement ... underscores the significance of maintaining a diverse AI ecosystem". It also depends on the organisation: many will want greater productivity and more uniformly high quality; "others might value maximum exploration and innovation".

Moreover, when everyone's using AI, "outputs generated without AI assistance might stand out ... due to their distinctiveness."

More in Ethan Mollick's tweet.

Read the Full Post

The above notes were curated from the full post: papers.ssrn.com/sol3/papers.cfm?abstract_id=4573321.
