Almost an Agent: What GPTs can do - by Ethan Mollick


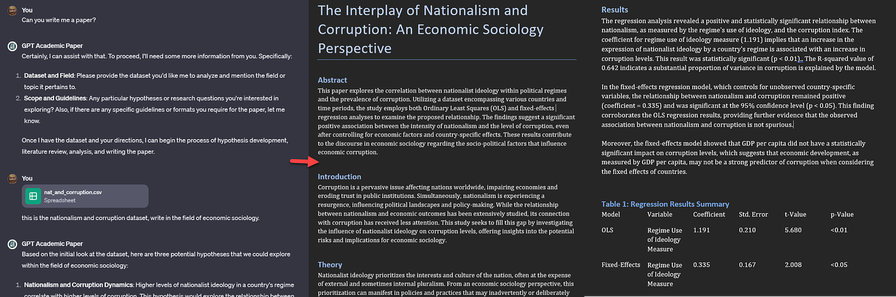

In the wake of OpenAI's release of GPTs, Mollick asks: "What would a real AI agent look like? A simple agent that writes academic papers would, after being given a dataset and a field of study, read about how to compose a good paper, analyze the data, conduct a literature review, generate hypotheses, test them, and then write up the results... you get a Word document". After kicking the tyres, that is exactly what he built.

"GPTs aren’t autonomous agents yet. I had to give feedback ... GPTs still have hallucinations and other issues", but nevertheless:

- "GPT system makes structured prompts more powerful and much easier to create, test, and share"

- they're a precursor to true (and trustworthy) agents, but they also...

- "suggest new future vulnerabilities and risks".

Using "GPT Builder... the AI helps you create a GPT through conversation", you can preview the result and iterate. Based on the conversation, the AI creates "a detailed configuration of the GPT, which I can also edit manually. The core ... is a structured prompt [plus] ... additional features... The [resulting] GPT ... is pretty good. But it isn’t amazing... To really build a great GPT, you are going to need to modify or build the structured prompt yourself."

While you can give a GPT documents to work from, it still hallucinates: "I had no warning that these mistakes happened, and would not have noticed them if I wasn’t cross-referencing".

We can now create GPTs and share them with the world: "communities and organizations can begin to work together to create a set of agents that can be useful for work and school". He provides an example writing coach agent, and "will be creating custom GPTs for every session of the classes I teach... simulations ... tutors or mentors [maybe]... teammates or assignments".

What of the risks? "GPTs can be easily integrated with other systems ... a problem because AIs are incredibly gullible".


The above notes were curated from the full post www.oneusefulthing.org/p/almost-an-agent-what-gpts-can-do.


See also: Large language models